In this post, we explore how to build a real-time RAG app with up-to-date information from your files stored in Google Drive or Sharepoint. This means that your chatbot will always have access to the most recent version of your knowledge base—no manual pipeline reruns needed. By the end of this tutorial, you’ll use Pathway and LlamaIndex to build a RAG chatbot that instantly updates.

Why Pathway?

Pathway is an open data processing framework. It allows you to easily develop data transformation pipelines and ML apps that work with live data sources. Pathway listens to your documents for changes, additions, and removals. It handles loading and indexing without ETL.

Pathway offers an indexing solution that is always up-to-date without the need for traditional ETL pipelines. It can monitor several data sources (such as files, S3 folders, and cloud storage) and provide the latest info to your LLM app.

This means you don’t need to worry about:

- Checking files to see if there are any changes

- Parsing PDFs, Word documents, or other text files

- Transforming, embedding documents, and loading them into a vector database

Once updates are made to the files that make up your knowledge base, the updated content is immediately re-indexed — you don’t have to deal with rerunning the pipeline.

App overview

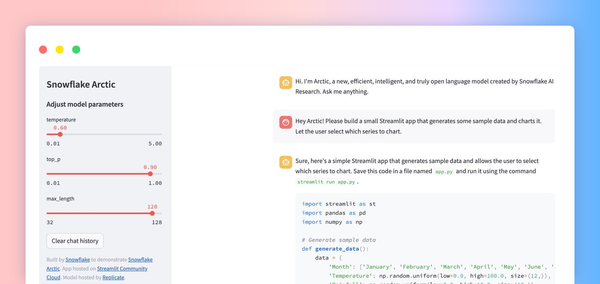

This demo consists of three parts.

- For up-to-date knowledge and information retrieval from the knowledge base’s documents, Pathway’s vector store is used.

- LlamaIndex creates the RAG pipeline and offers chat memory.

- Streamlit powers the easy-to-navigate user interface.

Tutorial: Creating a real-time RAG app with Pathway + LlamaIndex

Prerequisites

- An OpenAI API key (check out our instructions if you don’t already have one)

- A Pathway instance (the hosted version is provided free for the demo)

1. Adding data to the knowledge base

Pathway can listen to many sources simultaneously, such as local files, S3 folders, cloud storage, and data streams. In this example, you’ll add example documents to your pipeline by uploading files to a Google Drive registered to Pathway as a source. You can also check out the full docs on Pathway’s Google Drive connector.

For this demo, a Google Drive folder is provided for you to upload files. To test the app, we’ll ask our assistant questions about Pathway and it will respond based on the available files in the Google Drive folder.

See pathway-io for more information on available connectors and how to implement custom connectors.

2. Building a Pathway-powered chatbot

Retriever

First, import the necessary modules for the app.

from llama_index.retrievers import PathwayRetriever

from llama_index.query_engine import RetrieverQueryEngine

from llama_index.chat_engine.condense_question import CondenseQuestionChatEngine

from rag import chat_engine

Initialize the retriever with the hosted Pathway instance and create the query engine:

PATHWAY_HOST = "api-pathway-indexer.staging.deploys.pathway.com"

PATHWAY_PORT = 80

retriever = PathwayRetriever(host=PATHWAY_HOST, port=PATHWAY_PORT)

Chat Engine

We use CondenseQuestionChatEngine to create the RAG chatbot with LlamaIndex. One advantage of this chat engine is that it uses the context provided in the conversation history to write the search query. This results in answers that are more contextually relevant.

For further improvements to the pipeline, you can modify the chat engine type, prompt, and other parameters. For simplicity, we’ll use the default settings.

chat_engine = CondensePlusContextChatEngine.from_defaults(

retriever=retriever,

verbose=True,

)

3. Creating the UI with Streamlit

Create a title for the app and initialize session state values for the chatbot’s message history and the chat engine.

st.title("Pathway + LlamaIndex")

if "messages" not in st.session_state.keys():

st.session_state.messages = [

{"role": "assistant", "content": "Hi, ask me a question. My knowledge is always up to date!"}

]

st.session_state.chat_engine = chat_engine

Prompt the user for a question, store any user input in session state, and print messages from the user and the assistant.

if prompt := st.chat_input("Your question"):

st.session_state.messages.append({"role": "user", "content": prompt})

for message in st.session_state.messages:

with st.chat_message(message["role"]):

st.write(message["content"])

If the last message is from the user and the assistant is preparing an answer, create a st.spinner widget. Add the message content and role to the message history.

if st.session_state.messages[-1]["role"] != "assistant":

with st.chat_message("assistant"):

with st.spinner("Thinking..."):

response = st.session_state.chat_engine.chat(prompt)

st.write(response.response)

message = {"role": "assistant", "content": response.response}

st.session_state.messages.append(message)

Running the app

On Streamlit Community Cloud

The demo is hosted on Streamlit Community Cloud here. This version of the app uses Pathway's hosted document pipelines.

On your local machine

- Clone this repository locally.

- Create a

.envfile under the root folder to store your OpenAI API key. This demo uses the OpenAI GPT model to answer questions. - You also need a Pathway instance for vector search. For local deployment, see the vector store guide and Pathway Deployment.

- Run

streamlit run ui.py.

Congrats! Now you’re ready to chat with your documents and any file updates will be reflected by your app in real-time, thanks to Pathway.

Summing up

In this tutorial, you created and deployed a real-time RAG chatbot app. You also learned how easy it is to use Streamlit, LlamaIndex, and Pathway together, thanks to LlamaIndex’s Pathway Retriever. The end result is a RAG app that always has access to the most up-to-date version of your knowledge base.

Comments

Continue the conversation in our forums →